
Western public culture has no trouble speaking in the language of conquest, so long as the conqueror is European. We can list the crimes of empire in a catechism: invasion, extraction, settlement, forced conversion, slavery, and the slow grinding down of local institutions. We teach it. We ritualize it. We build moral identity around denouncing it.

But history did not outsource conquest to Europe.

From the 7th century onward, Muslim-ruled polities participated in a major, long-running arc of territorial expansion: the early Arab conquests across the Levant, Egypt, North Africa, and Persia; the push into Iberia; later Turkic and Ottoman expansion through Anatolia and into the Balkans; and, further east, Muslim dynasties consolidating power across parts of South Asia. This was not a tea party with trade agreements. It was war, regime change, tribute systems, and durable social hierarchies that reordered whole regions for centuries.

Even where rule was comparatively tolerant by the standards of its time, it was still rule. Non-Muslim populations were commonly governed as dhimmi: protected, yes, but subordinate. They often paid special taxes, faced legal asymmetries, and lived under conversion pressures that, in some contexts, ranged from overt coercion to the long, quiet incentives of status and security. The story differs by place and century. The pattern does not disappear.

Then there is slavery, another topic where our moral accounting often becomes selective. The trans-Saharan, Red Sea, and Indian Ocean systems ran for many centuries and involved very large numbers, though estimates vary widely. They fed household servitude, military slavery, and sex slavery. In the Mediterranean, North African corsairing and “Barbary piracy” produced European captives well into the early modern era. Some historians argue for totals in the low millions across those centuries, though the higher figures are disputed and other scholars propose substantially lower estimates. Either way, the phenomenon is real, and it is rarely integrated into the default Western “slavery story,” which typically means plantations, the Atlantic triangle, and racial chattel bondage. The Ottoman system also included practices like devshirme, the levy of Christian boys for state service, and imperial governance across religious communities was often managed by layered legal categories. Stability was purchased with inequality.

None of this is a claim that Muslim-majority societies are uniquely violent. They are not. It is a claim that they were human societies with power, ambition, and the usual imperial toolkit. Sometimes it was tempered by pragmatism. Sometimes it was sharpened by ideology. It was always shaped by the incentives of rule.

So why does so much Western academic and activist discourse treat “colonialism” as if it is a European invention, or at minimum reserve the hottest moral language for European cases?

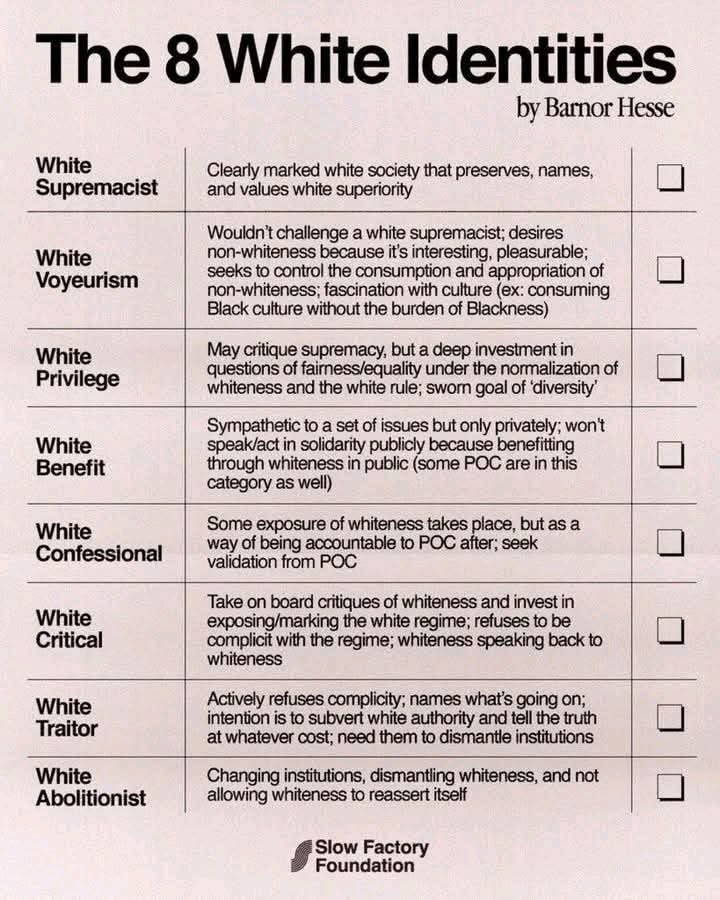

You can see the asymmetry in ordinary cultural output. A student can finish a humanities degree with a fluent vocabulary for “settler-colonialism,” “whiteness,” and “decolonization,” and still never be asked to apply the same conceptual machinery to the Islamization of North Africa, the Turkification of Anatolia, or the Ottoman imperial management of the Balkans. The knowledge exists. The integration often does not.

A few mechanisms are doing the work:

First: the moral map is drawn around modern Western guilt. Universities and NGOs in the West operate inside a story where the primary purpose of historical consciousness is to discipline our civilization. That can be valuable. Self-critique is healthier than propaganda. But it also creates a spotlight effect. If the goal is penance, you do not look for other sinners. You look for mirrors.

Second: postcolonial theory often frames power in a one-directional way. “Colonizer” and “colonized” become fixed identities rather than recurring historical roles. Once the roles harden into moral identities, describing conquest by non-Western empires becomes conceptually awkward. It scrambles the script.

Third: fear of misuse leads to silence. Many scholars and activists worry, often reasonably, that frank discussion of Islamic conquest will be weaponized by bigots. But the answer to bad faith is not selective amnesia. When institutions pre-censor reality to prevent “the wrong people” from citing it, they teach the public a fatal lesson. The gatekeepers do not trust the truth.

Fourth: selective “harm literacy” is now a career incentive. Some topics are safe, rewarded, and legible within current moral fashion. Others are professionally risky, easily smeared, or administratively discouraged. This does not require a conspiracy. It is simply an ecosystem where the costs and benefits are asymmetrical.

The result is not denial, exactly. It is a pattern of omission. Certain conquests are treated as the central moral lesson of history. Others are treated as context, complication, or footnote, no matter how long they lasted, how many people they reordered, or how durable their legal hierarchies proved.

If “colonization” is a real category, and if it means conquest, extraction, tribute, settlement, cultural subordination, and the restructuring of life under new rulers, then it has to apply wherever the pattern appears. Otherwise it is not analysis. It is choreography. 🎭

So here is the question Western institutions should be willing to answer plainly: Why is one empire’s violence treated as the moral template, while another empire’s violence is handled like a reputational hazard, especially when the same victims (religious minorities, conquered peoples, and enslaved captives) are supposed to matter in our universalist ethics?

Because the cost of selective memory is not merely academic. It trains citizens to distrust the referees. When respectable institutions signal, through omissions and asymmetrical moral urgency, that certain truths are too dangerous to say out loud, audiences will go looking for people who will say them. Often crudely. Often with their own distortions. And the gatekeepers will have manufactured the very problem they feared.

This meme only “works” if you stop letting equality smuggle itself in as a moral trump card.

Humans are born uneven. Not in worth—in capacity. Strength, IQ, impulse control, charm, health, family stability, appetite for risk, luck. You can pretend those differences don’t matter, but the moment people are allowed to act freely, they cash out into unequal outcomes. Some people build, some coast, some burn it all down. Freedom is a sorting machine.

So the first half is basically a description: if people are free, they will not end up equal. Not because someone rigged the game. Because the inputs aren’t equal and choices compound.

The second half is the warning: if you demand equality of outcomes, you don’t get it for free. You get it by force. There’s no other mechanism. Outcomes only converge when you stop people from doing the things that produce divergence: earning more, choosing differently, hiring freely, saying what they think, competing hard, associating with who they want, opting out. Equality-as-leveling needs an enforcer. And enforcers don’t show up with a gentle “please.” They show up with rules, penalties, and permission structures—what you’re allowed to do, to say, to keep.

That’s the core trade: freedom produces inequality; outcome equality requires coercion.

Now for the part people always dodge: there are different “equalities.” And conflating them is the whole scam.

- Equal dignity: every person counts as a person. That’s a moral claim. Compatible with freedom.

- Equality before the law: same rules, same due process, no caste exemptions. Also compatible with freedom—arguably required for it.

- Equality of outcomes: everyone ends up in roughly the same place. That’s the one that fights liberty, because it needs constant correction.

Most modern arguments cheat by pointing at the first two and then demanding the third. “If you deny outcome parity, you deny human worth.” No—what you’re denying is the claim that the state (or HR, or the university, or the tribunal) should get to manage adult lives until the spreadsheet looks morally satisfying.

You can have compassion without pretending outcomes should match. You can want upward mobility without confiscating difference. You can care about the bottom without pretending the top is illegitimate.

And yes: sometimes liberty creates ugly inequality. The honest response is to name the costs and argue about which constraints are justified—fraud laws, safety nets, antitrust, disability supports, basic education—without turning “equality” into a magic word that dissolves the question of coercion.

The meme’s point is simple and harsh: if you want equal outcomes, you’re volunteering everyone for supervision. And the people doing the supervising never start by supervising themselves.

The Age of Discovery was not a morality play. It was a capability leap. Between the late 1400s and the 1600s, Europeans built a durable system of oceanic navigation, mapping, and logistics that connected continents at scale. That system reshaped trade, ecology, science, and eventually politics across the world.

None of this requires sanitizing what came with it. Disease shocks, conquest, extraction, and slavery were not side notes. They were part of the story. The problem is what happens when modern retellings keep only one side of the ledger. When “discovery” is taught as a synonym for “oppression,” history stops being inquiry and becomes a single moral script.

What the era achieved

The Age of Discovery solved practical problems that had limited long-range sea travel: how to travel farther from coasts, how to fix position reliably, and how to represent the world in a form that could be used again by the next crew.

Routes mattered first. In 1488, Bartolomeu Dias rounded the Cape of Good Hope and helped establish the sea-route logic that linked the Atlantic to the Indian Ocean world. That made long voyages less like stunts and more like repeatable corridors.

Maps made it scalable. In 1507, Martin Waldseemüller’s map labeled “America” and presented the newly charted lands as a distinct hemisphere in European cartography. In 1569, Mercator’s projection made course-setting more practical by letting navigators plot constant bearings as straight lines. These were not aesthetic achievements. They were infrastructure for a global system.

Instruments and technique followed. Mariners relied on celestial measurement, and Europeans benefited from earlier work in the Islamic world and medieval transmission routes that carried astronomical knowledge and instrument development into Europe. This is worth stating plainly because it strengthens the real point: the Age of Discovery was not magic. It was the synthesis and scaling of knowledge into a logistical machine.

Finally, there was proof of global integration. Magellan’s expedition, completed after his death by Juan Sebastián del Cano, achieved the first circumnavigation. Whatever moral judgments one makes about the broader era, this was a genuine expansion of what humans could do and know.

What it cost

The same system that connected worlds also carried catastrophe.

Indigenous depopulation after 1492 was enormous. Scholars debate the causal mix across regions, but the scale is not seriously in dispute. One influential synthesis reports a fall from just over 50 million in 1492 to just over 5 million by 1650, with Eurasian diseases playing a central role alongside violence, displacement, and social disruption.

The transatlantic slave trade likewise expanded into a vast engine of forced migration and brutal labor. Best estimates place roughly 12.5 million people embarked, with about 10.7 million surviving the Middle Passage and arriving in the Americas. These are not “complications.” They are central moral facts.

And the Columbian Exchange, often simplified into “new foods,” was a sweeping biological and cultural transfer that included crops and animals, but also pathogens and ecological disruption. It permanently altered diets, landscapes, and power.

A reader can acknowledge all of that and still resist a common conclusion: that the entire era should be treated as a civilizational stain rather than a mixed human episode with world-changing outputs.

The Exchange is the model case for a full ledger

A fact-based account has to hold two truths at once.

First, the biological transfer had large, measurable benefits. Economic historians have argued that a single crop, the potato, can plausibly explain about one-quarter of the growth in Old World population and urbanization between 1700 and 1900. That is a civilizational consequence, not an opinion.

Second, the same transoceanic link that moved calories also moved coercion and disease. That is not a footnote. It is part of the mechanism.

The adult position is not denial and not self-flagellation. It is proportionality.

Where “critical theory” helps, and where it can deform

Critical theory is not one thing. In the broad sense, it names a family of approaches aimed at critique and transformation of society, often by making power, incentives, and hidden assumptions visible. In that role, it can correct older triumphalist histories that ignored victims and treated conquest as background noise.

The failure mode appears when the lens becomes total. When domination becomes the only explanatory variable, achievement becomes suspect simply because it is achievement, and complexity is treated as apology. The story turns into prosecution.

One can see the tension in popular history writing. Howard Zinn’s project, for example, explicitly recasts familiar episodes through the eyes of the conquered and the marginalized. That corrective impulse can be valuable. But critics such as Sam Wineburg have argued that the method often trades multi-causal history for moral certainty, producing a “single right answer” style of interpretation rather than a discipline of competing explanations. The risk is that students learn a posture instead of learning judgment.

A parallel point is worth making for Indigenous-centered accounts. Works like Roxanne Dunbar-Ortiz’s are explicit that “discovery” is often better described as invasion and settler expansion. Even when one disagrees with some emphases, the existence of that challenge is healthy. It forces the older story to grow up.

But there is a difference between correction and replacement. Corrective history adds missing facts and voices. Replacement history insists there is only one permissible meaning.

Verdict: defend the full ledger

Western civilization does not need to be imagined as flawless to be defended as consequential and often beneficial. The Age of Discovery expanded human capabilities in navigation, cartography, and global integration. It also produced immense suffering through disease collapse, coercion, and slavery.

A healthy civic memory holds both sides of that ledger. It teaches the costs without denying the achievements, and it refuses any ideology that demands a single moral story as the price of belonging.

References

Bartolomeu Dias (1488) — Encyclopaedia Britannica

https://www.britannica.com/biography/Bartolomeu-Dias

Recognizing and Naming America (Waldseemüller 1507) — Library of Congress

https://www.loc.gov/collections/discovery-and-exploration/articles-and-essays/recognizing-and-naming-america/

Mercator projection — Encyclopaedia Britannica

https://www.britannica.com/science/Mercator-projection

Magellan and the first circumnavigation; del Cano — Encyclopaedia Britannica

https://www.britannica.com/biography/Ferdinand-Magellan

https://www.britannica.com/biography/Juan-Sebastian-del-Cano

Mariner’s astrolabe and transmission via al-Andalus — Mariners’ Museum

European mariners owed much to Arab astronomers — U.S. Naval Institute (Proceedings)

https://www.usni.org/magazines/proceedings/1992/december/navigators-1490s

Indian Ocean trade routes as a pre-existing global network — OER Project

https://www.oerproject.com/OER-Materials/OER-Media/HTML-Articles/Origins/Unit5/Indian-Ocean-Routes

Indigenous demographic collapse (1492–1650) — British Academy (Newson)

Transatlantic slave trade estimates — SlaveVoyages overview; NEH database project

https://legacy.slavevoyages.org/blog/brief-overview-trans-atlantic-slave-trade

https://www.neh.gov/project/transatlantic-slave-trade-database

Potato and Old World population/urbanization growth — Nunn & Qian (QJE paper/PDF)


Critical theory (as a family of theories; Frankfurt School in the narrow sense) — Stanford Encyclopedia of Philosophy

https://plato.stanford.edu/entries/critical-theory/

Zinn critique: “Undue Certainty” — Sam Wineburg, American Educator (PDF)

https://www.aft.org/ae/winter2012-2013

Indigenous-centered framing (as a counter-story) — Beacon Press (Dunbar-Ortiz)

https://www.beacon.org/An-Indigenous-Peoples-History-of-the-United-States-P1164.aspx

In the world of advocacy and human rights, consistency is more than just a virtue—it’s what gives our principles real meaning. Recently, a comment on social media highlighted a familiar pattern: certain voices who are vocal about one cause may fall silent when similar struggles appear in a different context. It’s a reminder that if we want justice to truly be just, it must be blind to who is involved—applying the same standards to all people, regardless of race, creed, or background.

This isn’t about slamming any particular group; it’s about encouraging all of us to reflect on the importance of consistency. When we advocate for human rights, it’s crucial that we do so across the board. If a group of protesters in one country deserves our solidarity, then those in another country risking their lives for similar ideals deserve it too.

In short, “justice” in quotes should indeed be blind. Not in the sense of ignoring the nuances of each situation, but in the sense of applying our moral standards fairly and universally. By doing so, we strengthen the credibility of our advocacy and remind the world that human rights aren’t selective—they’re for everyone.

Find the tweet that inspired this post here.

- Universal Child Care Benefit (UCCB, 2006; expanded 2015): Provided $100/month per child under 6 (later $160), plus $60/month for ages 6–17. This universal payment went to all families, delivering $1,200–$1,920 annually per young child to help with living or childcare costs—directly benefiting low-income households without means-testing stigma.

- Working Income Tax Benefit (WITB, 2007; precursor to Canada Workers Benefit): A refundable credit topping up earnings for low-wage workers (up to $1,000 for singles, $2,000 for families), reducing the “welfare wall” and making work more rewarding.

- Registered Disability Savings Plan (RDSP, 2008): Government matching grants up to 300% plus bonds up to $1,000/year for low-income families with disabled members.

- Tax-Free Savings Account (TFSA, 2009): Allowed tax-free growth and withdrawals, helping low-income Canadians build emergency savings.

- Children’s Fitness and Arts Tax Credits (2006–2014 expansions): Up to $500–$1,000 per child, made partially refundable for low-income families.

Other measures included enhanced GST/HST credits, public transit tax credits, caregiver credits, and increased funding for First Nations child welfare. These weren’t trickle-down theories; they were direct transfers and credits that disproportionately aided lower-income groups.

Measurable Impact: Poverty and Low-Income Rates Declined

Statistics Canada data corroborates the effectiveness of these policies:

- Child poverty under the Market Basket Measure (MBM, Canada’s official poverty line since 2018) showed improvement during the Harper years, with overall poverty at 14.5% in 2015 (the benchmark year for federal targets).

- Low-income rates using the after-tax Low Income Measure (LIM-AT) fell from around 13–14% in the mid-2000s to 11.2% by 2015.

- After-tax incomes for the bottom income quintile rose approximately 17% from 2006 to 2015, driven by tax cuts and benefits.

While poverty dropped more sharply after 2015 with the introduction of the Canada Child Benefit (which built on and reformed some Harper-era programs), the Harper government laid groundwork with direct supports that helped stabilize and reduce low-income rates amid the 2008 global recession.

Why the Myth Persists, and Why It’s Misleading

Critics often prefer expansive government-run programs (e.g., national daycare) over direct cash to families, viewing the latter as insufficient.

- Original X thread and policy list: https://x.com/GreatBig_Sea/status/1982121517665137029

- Statistics Canada Dimensions of Poverty Hub (MBM and LIM trends): https://www.statcan.gc.ca/en/topics-start/poverty

- Government of Canada background on Harper-era family measures: https://www.canada.ca/en/news/archive/2015/07/today-parents-get-child-care-payments-harper-government-1003359.html

- Wikipedia summary of Universal Child Care Benefit (with sources): https://en.wikipedia.org/wiki/Canada_Child_Benefit

In the end, actions speak louder than slogans. The Harper record shows a commitment to practical support for low-income families—not indifference.
