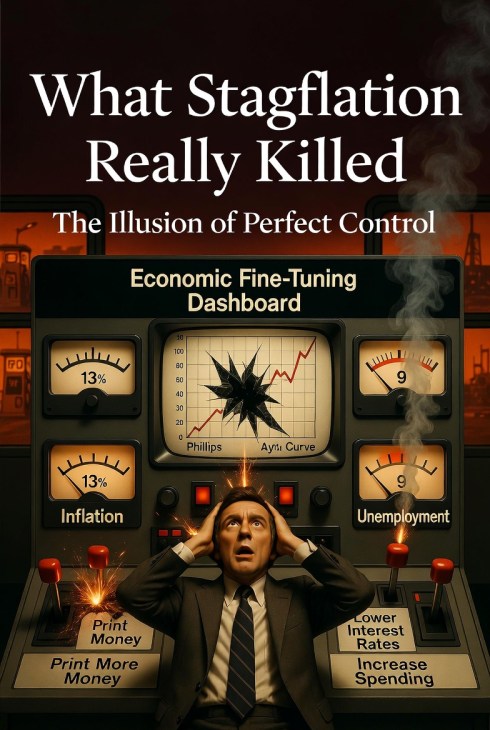

The lesson of 1970s stagflation was not that governments can do nothing. It was that the people running policy understood less than they claimed, and that the tools they trusted were much cruder than advertised. The “Great Inflation” from roughly 1965 to 1982 forced economists and central banks to rethink how inflation, unemployment, and monetary policy actually interact. (Federal Reserve History)

For a time, the postwar consensus rested on a flattering idea. Inflation and unemployment were treated as a manageable trade-off. The Phillips Curve was not just read as a pattern in the data. In practice, it became a governing intuition: if unemployment rose, policymakers could push demand higher and accept somewhat more inflation as the cost. That was the real temptation. A relationship observed under one set of conditions was quietly promoted into an instrument of control. The curve stopped being a caution and became a dashboard. That is where the error entered. As later critiques made clear, any apparent trade-off could break down once expectations adjusted. (Federal Reserve History)

Then the 1970s arrived and the trade-off stopped behaving.

Inflation rose sharply while unemployment remained painfully high. BLS historical CPI data show annual U.S. inflation at 11.0 percent in 1974, 11.3 percent in 1979, and 13.5 percent in 1980. Federal Reserve History describes the Great Inflation as the defining macroeconomic period of the second half of the twentieth century: an era that combined persistent inflation with serious economic weakness and forced a rethink of earlier policy assumptions. The old promise had implied that these pressures could be balanced against each other. Instead they arrived together. (Bureau of Labor Statistics)

It is tempting to tell that story too neatly. Some people reduce stagflation to one cause, usually Nixon’s August 1971 suspension of dollar convertibility into gold. That was a major monetary break, and it helped bring the Bretton Woods system to an end. But it was not the whole story. Nixon’s package also included wage and price controls, and the wider period was shaped by multiple interacting forces, including oil shocks and broader inflation dynamics. The point of that complexity is not to rescue the old confidence. It is to bury it. An economy shaped by that many moving parts was never going to be managed with the precision implied by mid-century technocratic rhetoric. (Federal Reserve History)

This is where some critics of monetary manipulation look stronger in retrospect than they did at the time. Austrian economists such as Mises and Hayek had long warned that money and credit are not harmless policy tools. Cheap credit can distort investment. Monetary expansion can scramble price signals. Artificial booms can end in painful correction. There is no need to pretend they possessed a complete script for every feature of 1970s macroeconomics. They did not. But they were directionally right about something central: when policymakers treat money as an instrument of short-run management rather than a framework for stable coordination, they increase the odds of disorder. That warning aged better than the promise of fine-tuning. This is an interpretive judgment, but it is supported by how badly the simpler policy reading of the Phillips Curve fared during the Great Inflation. (Federal Reserve History)

Paul Volcker’s anti-inflation campaign, which began in October 1979 and ran into the early 1980s, drove the point home in brutal form. The Federal Reserve’s shift to tighter policy helped bring inflation down, but the price of restoring credibility was severe. Federal Reserve History notes that inflation fell sharply after its 1980 peak, while unemployment reached 10.8 percent in late 1982 during the deep 1981–82 recession. That was not the triumph of elegant expert control. It was the bill arriving. Once inflationary disorder hardens, the correction is rarely gentle. (Federal Reserve History)

So what did stagflation actually kill?

Not economics. Not all state action. Not even every Keynesian insight. What it killed was a style of elite confidence. It killed the belief that national economies can be fine-tuned with enough intelligence, enough models, and enough institutional nerve. It killed the conceit that the dashboard is the machine. The language has changed since then. The models are more sophisticated. The temptation is still with us. Every generation of managers wants to believe that this time the controls are better and the uncertainties smaller. The 1970s remain useful because they remind us that policy operates under limits, trade-offs turn ugly, and reality does not care how elegant the model looked on paper. (Federal Reserve History)

Glossary

Phillips Curve

A model associated with a short-run relationship between inflation and unemployment. In practice, many policymakers treated it as if lower unemployment could be purchased with somewhat higher inflation. The 1970s badly damaged confidence in that simple reading. (Federal Reserve History)

Stagflation

A period of high inflation combined with weak growth and high unemployment. The 1970s made the term famous because that combination was supposed to be difficult to sustain under older policy assumptions. (Federal Reserve History)

Fiat money

Money that is not redeemable for a commodity such as gold and instead depends on legal and institutional backing. Nixon’s 1971 decision ended dollar convertibility into gold for foreign governments and central banks. (Federal Reserve History)

Bretton Woods system

The postwar international monetary order in which other currencies were pegged to the U.S. dollar, and the dollar was convertible into gold under the system’s rules. It unraveled in the early 1970s. (Federal Reserve History)

Disinflation

A slowing in the rate of inflation. Prices may still be rising, but less quickly than before. Volcker’s early-1980s policy is a classic U.S. example. (Federal Reserve History)

References / URLs

Federal Reserve History, “The Great Inflation”

https://www.federalreservehistory.org/essays/great-inflation

Federal Reserve History, “Nixon Ends Convertibility of U.S. Dollars to Gold and Announces Wage/Price Controls”

https://www.federalreservehistory.org/essays/gold-convertibility-ends

Federal Reserve History, “Volcker’s Announcement of Anti-Inflation Measures”

https://www.federalreservehistory.org/essays/anti-inflation-measures

Federal Reserve History, “Recession of 1981–82”

https://www.federalreservehistory.org/essays/recession-of-1981-82

Federal Reserve History, “Creation of the Bretton Woods System”

https://www.federalreservehistory.org/essays/bretton-woods-created

U.S. Bureau of Labor Statistics, Historical CPI-U, 1913–2023

https://www.bls.gov/cpi/tables/supplemental-files/historical-cpi-u-202312.pdf

Collin May has published a long, ambitious essay in C2C Journal, “Hearts of Darkness: How the Left Uses Hate to Fuel its 21st Century Universal Imperium,” on cancel culture, “hate” rhetoric, and the modern left’s moral posture. It is broader in scope than anything I would write, more philosophical than most readers will tolerate, and occasionally overbuilt. But it names a pattern that matters, and one I return to often here: once “hate” becomes a universal accusation, institutions stop persuading and start policing.

May’s most useful contribution is not just the complaint (“cancel culture exists”) but the mechanism: “hate” stops being a moral description and becomes a category that pre-sorts who may be argued with and who may simply be managed.

That is the issue.

Not whether hatred exists. It does. Not whether some speech is vicious. It is. The issue is what happens when “hate” becomes the default label for disagreement, skepticism, refusal, dissent, or plain moral and factual judgments that cut against elite narratives.

At that point, the term stops describing and starts doing administrative work.

You can watch this happen across the institutions that shape public life: media, HR departments, professional bodies, universities, bureaucracies, and the expanding quasi-legal space around speech regulation. The sequence is familiar. Someone raises a concern about policy, ideology, language rules, school programming, medical ethics, public safety, immigration, religion, or sex-based rights. Instead of answering the argument, the institution reframes the speaker. Not wrong—harmful. Not questioning—spreading hate. Not participating in democratic friction—a threat to social order.

That move changes the rules of engagement. A wrong claim can be debated. A “hateful” claim can be quarantined. Once a claim is reclassified as harm rather than argument, the institutional response changes with it: less rebuttal, more restriction.

This language matters because it is not only moral language. It is managerial language. It justifies deplatforming, censorship, professional discipline, reputational destruction, and exclusion from ordinary civic legitimacy. It creates a class of people whose arguments no longer need to be answered on the merits. It also trains bystanders to confuse moral panic with moral seriousness.

May explains this through a sweeping historical and philosophical genealogy. Fair enough. I am less interested in the full genealogy than in the practical result in front of us. In plain terms: the rhetoric of “hate” is often used to centralize authority in institutions that no longer trust the public and no longer feel obliged to reason with them.

That is one reason trust keeps collapsing.

People can live with disagreement. They can even live with policies they dislike. What they do not tolerate for long is being handled—being told their questions are illegitimate before they are heard. Once citizens conclude that institutions are using moral language as a shield against scrutiny, every future statement gets discounted. Even true statements are heard as spin.

And then the damage compounds. If “hate” is defined so broadly that it includes dissent, genuinely hateful speech becomes harder to identify and confront. The category gets inflated, politicized, and cheapened. Meanwhile, ordinary democratic disagreement becomes harder to conduct without professional or social risk.

That is not a confident free society. It is a managerial one.

Canada is not exempt. We have our own versions of this habit: speech debates reframed as safety debates, policy criticism recoded as identity harm, and public disputes (including over schools, sex-based rights, and even routine civic rituals like land acknowledgements) routed through tribunals, regulators, HR offices, and media scripts instead of open argument. The details vary by case. The mechanism does not. This tactic is not unique to one political tribe, but it is now especially entrenched in progressive-managerial institutions, which is precisely why it has so much reach.

The answer is not to deny hatred exists, or to become casual about cruelty. The answer is to recover civic discipline.

Name actual incitement when it occurs. Enforce existing laws where there are real threats, harassment, or violence. But stop using “hate” as a catch-all for disfavoured views. Stop treating condemnation as a substitute for evidence. Stop teaching institutions that the way to win an argument is to disqualify the speaker.

May quotes Pope Francis on cancel culture as something that “leaves no room.” Whether or not one follows May’s full historical argument, that phrase captures the operational problem.

A liberal society cannot function if citizens are only permitted to disagree inside moral boundaries drawn in advance by bureaucrats, activists, and legacy media.

The test is simple: can a claim be examined without first being moralized into silence?

If the answer is no, that is not moral confidence. It is institutional insecurity backed by power.

That is the pattern worth naming. And that is why essays like May’s, even when they overshoot, remain worth reading.

References

Collin May, “Hearts of Darkness: How the Left Uses Hate to Fuel its 21st Century Universal Imperium,” C2C Journal (February 16, 2026), https://c2cjournal.ca/2026/02/hearts-of-darkness-how-the-left-uses-hate-to-fuel-its-21st-century-universal-imperium/. (C2C Journal)

Western public culture has no trouble speaking in the language of conquest, so long as the conqueror is European. We can list the crimes of empire in a catechism: invasion, extraction, settlement, forced conversion, slavery, and the slow grinding down of local institutions. We teach it. We ritualize it. We build moral identity around denouncing it.

But history did not outsource conquest to Europe.

From the 7th century onward, Muslim-ruled polities participated in a major, long-running arc of territorial expansion: the early Arab conquests across the Levant, Egypt, North Africa, and Persia; the push into Iberia; later Turkic and Ottoman expansion through Anatolia and into the Balkans; and, further east, Muslim dynasties consolidating power across parts of South Asia. This was not a tea party with trade agreements. It was war, regime change, tribute systems, and durable social hierarchies that reordered whole regions for centuries.

Even where rule was comparatively tolerant by the standards of its time, it was still rule. Non-Muslim populations were commonly governed as dhimmi: protected, yes, but subordinate. They often paid special taxes, faced legal asymmetries, and lived under conversion pressures that, in some contexts, ranged from overt coercion to the long, quiet incentives of status and security. The story differs by place and century. The pattern does not disappear.

Then there is slavery, another topic where our moral accounting often becomes selective. The trans-Saharan, Red Sea, and Indian Ocean systems ran for many centuries and involved very large numbers, though estimates vary widely. They fed household servitude, military slavery, and sex slavery. In the Mediterranean, North African corsairing and “Barbary piracy” produced European captives well into the early modern era. Some historians argue for totals in the low millions across those centuries, though the higher figures are disputed and other scholars propose substantially lower estimates. Either way, the phenomenon is real, and it is rarely integrated into the default Western “slavery story,” which typically means plantations, the Atlantic triangle, and racial chattel bondage.

The Ottoman system also included practices like devshirme, the levy of Christian boys for state service, and its governance of religious communities often ran through layered legal categories. Stability was purchased with inequality.

None of this is a claim that Muslim-majority societies are uniquely violent. They are not. It is a claim that they were human societies with power, ambition, and the usual imperial toolkit. Sometimes it was tempered by pragmatism. Sometimes it was sharpened by ideology. It was always shaped by the incentives of rule.

So why does so much Western academic and activist discourse treat “colonialism” as if it were a European invention, or at minimum reserve the hottest moral language for European cases?

You can see the asymmetry in ordinary cultural output. A student can finish a humanities degree with a fluent vocabulary for “settler-colonialism,” “whiteness,” and “decolonization,” and still never be asked to apply the same conceptual machinery to the Islamization of North Africa, the Turkification of Anatolia, or the Ottoman imperial management of the Balkans. The knowledge exists. The integration often does not.

A few mechanisms are doing the work:

First: the moral map is drawn around modern Western guilt. Universities and NGOs in the West operate inside a story where the primary purpose of historical consciousness is to discipline our civilization. That can be valuable. Self-critique is healthier than propaganda. But it also creates a spotlight effect. If the goal is penance, you do not look for other sinners. You look for mirrors.

Second: postcolonial theory often frames power in a one-directional way. “Colonizer” and “colonized” become fixed identities rather than recurring historical roles. Once the roles harden into moral identities, describing conquest by non-Western empires becomes conceptually awkward. It scrambles the script.

Third: fear of misuse leads to silence. Many scholars and activists worry, often reasonably, that frank discussion of Islamic conquest will be weaponized by bigots. But the answer to bad faith is not selective amnesia. When institutions pre-censor reality to prevent “the wrong people” from citing it, they teach the public a fatal lesson: the gatekeepers do not trust the truth.

Fourth: selective “harm literacy” is now a career incentive. Some topics are safe, rewarded, and legible within current moral fashion. Others are professionally risky, easily smeared, or administratively discouraged. This does not require a conspiracy. It is simply an ecosystem where the costs and benefits are asymmetrical.

The result is not denial, exactly. It is a pattern of omission. Certain conquests are treated as the central moral lesson of history. Others are treated as context, complication, or footnote, no matter how long they lasted, how many people they reordered, or how durable their legal hierarchies proved.

If “colonization” is a real category, and if it means conquest, extraction, tribute, settlement, cultural subordination, and the restructuring of life under new rulers, then it has to apply wherever the pattern appears. Otherwise it is not analysis. It is choreography. 🎭

So here is the question Western institutions should be willing to answer plainly: Why is one empire’s violence treated as the moral template, while another empire’s violence is handled like a reputational hazard, especially when the same kinds of victims (religious minorities, conquered peoples, enslaved captives) are supposed to matter in our universalist ethics?

Because the cost of selective memory is not merely academic. It trains citizens to distrust the referees. When respectable institutions signal, through omissions and asymmetrical moral urgency, that certain truths are too dangerous to say out loud, audiences will go looking for people who will say them. Often crudely. Often with their own distortions. And the gatekeepers will have manufactured the very problem they feared.

This meme only “works” if you stop letting equality smuggle itself in as a moral trump card.

Humans are born uneven. Not in worth—in capacity. Strength, IQ, impulse control, charm, health, family stability, appetite for risk, luck. You can pretend those differences don’t matter, but the moment people are allowed to act freely, they cash out into unequal outcomes. Some people build, some coast, some burn it all down. Freedom is a sorting machine.

So the first half is basically a description: if people are free, they will not end up equal. Not because someone rigged the game. Because the inputs aren’t equal and choices compound.

The second half is the warning: if you demand equality of outcomes, you don’t get it for free. You get it by force. There’s no other mechanism. Outcomes only converge when you stop people from doing the things that produce divergence: earning more, choosing differently, hiring freely, saying what they think, competing hard, associating with who they want, opting out. Equality-as-leveling needs an enforcer. And enforcers don’t show up with a gentle “please.” They show up with rules, penalties, and permission structures—what you’re allowed to do, to say, to keep.

That’s the core trade: freedom produces inequality; outcome equality requires coercion.

Now for the part people always dodge: there are different “equalities.” And conflating them is the whole scam.

- Equal dignity: every person counts as a person. That’s a moral claim. Compatible with freedom.

- Equality before the law: same rules, same due process, no caste exemptions. Also compatible with freedom—arguably required for it.

- Equality of outcomes: everyone ends up in roughly the same place. That’s the one that fights liberty, because it needs constant correction.

Most modern arguments cheat by pointing at the first two and then demanding the third. “If you deny outcome parity, you deny human worth.” No—what you’re denying is the claim that the state (or HR, or the university, or the tribunal) should get to manage adult lives until the spreadsheet looks morally satisfying.

You can have compassion without pretending outcomes should match. You can want upward mobility without confiscating difference. You can care about the bottom without pretending the top is illegitimate.

And yes: sometimes liberty creates ugly inequality. The honest response is to name the costs and argue about which constraints are justified—fraud laws, safety nets, antitrust, disability supports, basic education—without turning “equality” into a magic word that dissolves the question of coercion.

The meme’s point is simple and harsh: if you want equal outcomes, you’re volunteering everyone for supervision. And the people doing the supervising never start by supervising themselves.

Your opinions…