We’ve done the setup.

First, the feeling: something shifts, guidance changes, and people aren’t sure what they’re looking at. Then the distinction: science has social layers around it, but the core activity—testing models against reality—is constrained by something that isn’t.

Now we get to the part that matters:

What does it look like when that constraint holds—and when it doesn’t?

Because this isn’t theoretical.

It happens in the wild.

The Simple Test People Already Use

Most people don’t talk about models or epistemology.

They do something simpler.

They watch outcomes—whether predictions land, whether explanations hold up, whether the goalposts move after the fact.

When those answers line up, trust builds, even if the conclusion is inconvenient.

When they don’t, something else starts to creep in. Not always a conspiracy. Not always bad faith. But something other than clean model-testing.

What Healthy Science Looks Like

You don’t need a textbook definition. You can recognize it by behavior.

Healthy scientific practice tends to show a few patterns. Claims are tied to specific predictions. Uncertainty is stated, not buried. Errors get corrected without theatrical reversals. Competing models are allowed to fail on their own terms.

It can still be messy. It can still be wrong.

But the direction is clear: toward tighter alignment with reality.

When It Starts to Drift

When social pressures start bending the process, the signals change.

You begin to see claims framed as conclusions first, reasoning second. Heavy reliance on consensus language instead of model performance. Criticism treated as disloyalty rather than error-checking. Revisions framed as narrative continuity instead of correction.

None of these prove corruption on their own.

But together, they form a pattern—and people pick up on that pattern, even if they can’t articulate it.

The Substitution Problem

At the core, something gets swapped.

Instead of:

Does the model work?

You get:

Does the claim align with the current consensus?

That substitution is subtle. It doesn’t announce itself. It shows up in language, in incentives, in what gets amplified and what gets ignored.

And once it happens, the whole system starts to feel different.

Why It Feels Off

People aren’t just tracking claims. They’re tracking consistency—between what was said, what actually happened, and how the update was explained.

When those line up, even large changes feel legitimate.

When they don’t, even small adjustments feel like manipulation.

That’s the gap.

Where Constructivism Gets Its Grip

When people see that gap—shifting language, inconsistent framing, institutional defensiveness—they start looking for explanations.

One of those explanations is:

Maybe truth itself is being negotiated.

That’s where strong social constructivism starts to feel persuasive.

Not because it’s correct.

Because it seems to explain what people are seeing.

The Problem With That Conclusion

It overcorrects.

It takes real failures—communication breakdowns, incentive distortions, institutional bias—and treats them as proof that scientific truth itself is socially constructed.

But those same failures tend to degrade science’s ability to do the one thing that matters:

Track reality.

Bad models don’t suddenly start working because they’re socially supported.

They still fail, and often more visibly.

The Constraint Doesn’t Go Away

Even in distorted environments, the underlying constraint is still there.

Predictions still miss. Explanations still break. Reality still refuses to cooperate.

That’s why bad theories eventually collapse, better ones replace them, and the process—however uneven—keeps moving.

Not because institutions are perfect.

Because the world doesn’t bend.

What Actually Makes This Work

If you had to compress what keeps science from collapsing into pure consensus, it isn’t a slogan or an institution.

It’s a set of recurring demands placed on any model that wants to survive.

A model has to say what happens next—and then be judged against it. It has to explain more than its competitors without multiplying assumptions. It has to hold together internally when pushed, not unravel into contradiction. And when reality doesn’t cooperate, it has to adjust rather than dig in.

None of that depends on who proposes the model. None of it depends on which institution backs it.

Those pressures come from the outside.

And they’re what make it very difficult—though not impossible—for social forces to fully take over.

What This Means for the Rest of Us

You don’t need to become a scientist to navigate this.

But you do need a clearer lens.

When you’re looking at a scientific claim, the question isn’t:

Who agrees with this?

It’s:

What would count as this being wrong—and did that test happen?

That’s the difference between evaluating a model and deferring to a position.

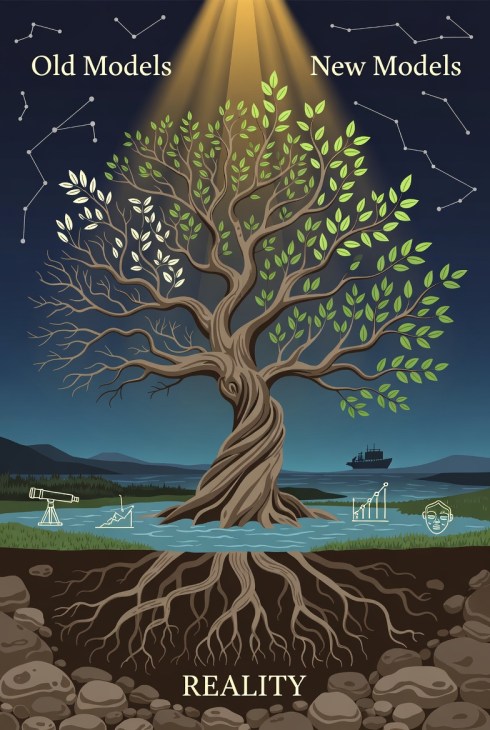

The Line That Still Holds

Science is done by human beings. It sits inside institutions. It’s shaped by incentives.

None of that is in dispute.

But the reason it works—the reason it produces anything usable at all—is that it runs up against something that doesn’t care about any of that.

Reality pushes back.

It doesn’t negotiate. It doesn’t care about consensus. It doesn’t adjust to save face.

And that’s the only reason the entire enterprise holds together.

Final Position

Scientific objectivity doesn’t mean:

- scientists are unbiased

- institutions are clean

- conclusions never change

It means something narrower—and more important.

It means that, at its best, the process is constrained by whether its models survive contact with the world.

Everything else sits on top of that.

Sometimes cleanly.

Sometimes not.

But if you lose that constraint, you don’t just get flawed science.

You get something else entirely.

Further Reading

Core Philosophy of Science (Accessible but Serious)

- Theory and Reality

https://press.uchicago.edu/ucp/books/book/chicago/T/bo3773461.html

Best single overview of how science actually works: models, evidence, and realism versus anti-realism.

- The Structure of Scientific Revolutions

https://press.uchicago.edu/ucp/books/book/chicago/S/bo13179781.html

Often cited by constructivists—worth reading directly to see what it does (and doesn’t) claim.

Critiques of Strong Social Constructivism

- Fashionable Nonsense

https://www.penguinrandomhouse.com/books/117184/fashionable-nonsense-by-alan-sokal-and-jean-bricmont/

A direct critique of postmodern misuse of scientific language and of relativism.

- Stanford Encyclopedia of Philosophy — Social Construction

https://plato.stanford.edu/entries/social-construction/

Clear, neutral breakdown of what “social construction” actually means across domains.

How Science Fails (and Self-Corrects)

- Tuskegee Syphilis Study — Overview

https://www.archives.gov/research/african-americans/individuals/tuskegee-study

A case of ethical and methodological failure, useful for understanding how bias corrupts both morality and knowledge.

- The Demon-Haunted World

https://www.penguinrandomhouse.com/books/158581/the-demon-haunted-world-by-carl-sagan/

A practical defense of scientific thinking as a way of testing claims against reality.

Modern Trust & Institutional Context

- Why Trust Science?

https://press.princeton.edu/books/hardcover/9780691179001/why-trust-science

Argues for trust grounded in scientific processes and communities—useful as a counterpoint.

1 comment


May 9, 2026 at 12:21 pm

tildeb

The more common approach to substituting narrative for reality is to claim that only the former is moral, and that not believing in the narrative is a sign of distortion caused by some character flaw that produces the immorality. This is religious to the core because the method of substitution is identical. The red flag is the introduction of a moral metric to a scientific claim. When one encounters this, one is encountering ideology hard at work. The defence is to not go along with it.
